Programming with ChatGPT

Rather than perform an exercise just for the sake of it, like writing fortunes for fortune cookies, which it does successfully in under a minute, I waited for an opportunity to have ChatGPT write a program that really mattered to me.

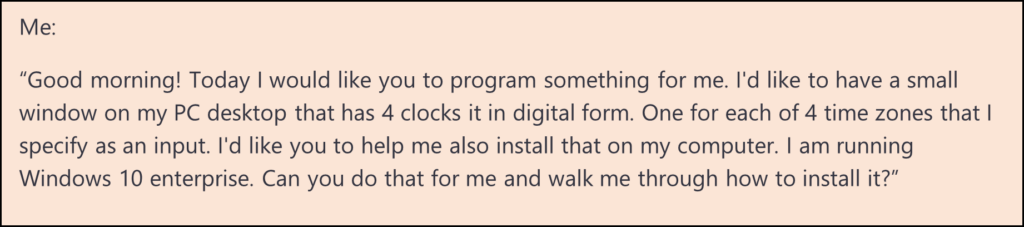

For example, as I’ve been working with people from Singapore, Australia, Stockholm, and San Francisco, I’ve found myself constantly messing up my time zones, and embarrassingly signing onto Zoom at all the wrong times. So, I asked ChatGPT:

I learned a great deal from this exercise – the good, the bad and the ugly. In the process, I gained both a new appreciation for what it can do and some very sobering realizations about what it can’t.

ChatGPT quickly responded with a Python program which, thanks to having programmed at a rudimentary level before, I was able to install and run with ChatGPT’s guidance. The program, like the fortune cookie program, worked the first time. It put up four digital clocks on my desktop, and ChatGPT helped me get it to start up on reboot. This fit all my fantasies and fears of what it is capable of. Here I was, telling it what I wanted, and it performed. Instead of defining a product spec, block diagramming it, programming it with care taken to make it readable, commented, and debuggable, testing it, debugging it, and finalizing it, I could leave all the details up to the AI and get on with my work. And it cost me nearly nothing to do it – no programmers, no meetings, no time. These are the makings of many job replacements.
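The program ChatGPT wrote is not reproduced in this article, so what follows is only a minimal sketch of the core idea behind such a clock: converting one instant into the local time of several cities. It assumes Python 3.9 or later with the standard `zoneinfo` module and available time zone data. The `CITIES` table and the `local_times` function are hypothetical names, and Sydney is an assumed stand-in for "Australia".

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # standard library since Python 3.9

# Hypothetical city list. The IANA zone names are assumptions chosen to
# match the places mentioned above (Sydney stands in for "Australia").
CITIES = {
    "Singapore": "Asia/Singapore",
    "Sydney": "Australia/Sydney",
    "Stockholm": "Europe/Stockholm",
    "San Francisco": "America/Los_Angeles",
}


def local_times(utc_now, cities=CITIES):
    """Return {city: 'HH:MM'} for a single timezone-aware instant."""
    return {
        city: utc_now.astimezone(ZoneInfo(zone)).strftime("%H:%M")
        for city, zone in cities.items()
    }


if __name__ == "__main__":
    # Print one line per city for the current moment.
    for city, hhmm in local_times(datetime.now(timezone.utc)).items():
        print(f"{city:<14}{hhmm}")
```

A desktop version would redraw these values on a timer inside a GUI window; that part is left out here because the article does not say which toolkit was used.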

Then the engineer in me emerged. Maybe I would like analog clocks instead. That would make it nicer. It obliged, but drew just the hands. So, I asked it to add clock faces. Then put it in the corner, shade the faces that displayed night hours, make the cities changeable, put AM and PM on it, make a little digital time for my local time, and… I think you get the idea. I wanted it customized exactly to a taste that I was making up in real time.
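Several of these requests come down to small calculations. The sketch below shows one way they might be done, not the code ChatGPT actually produced: hand angles for an analog face, a night test for shading, and an AM/PM label. The function names are hypothetical, and the 06:00 to 18:00 daytime window is an assumed cutoff.

```python
from datetime import time


def hand_angles(t):
    """Return (hour_angle, minute_angle) in degrees clockwise from 12."""
    # The minute hand moves 6 degrees per minute, plus 0.1 per second.
    minute_angle = t.minute * 6.0 + t.second * 0.1
    # The hour hand moves 30 degrees per hour, plus 0.5 per minute.
    hour_angle = (t.hour % 12) * 30.0 + t.minute * 0.5
    return hour_angle, minute_angle


def is_night(t, day_start=6, day_end=18):
    """True when the hour falls outside the assumed daytime window."""
    return not (day_start <= t.hour < day_end)


def am_pm(t):
    """Label for a 12-hour display."""
    return "AM" if t.hour < 12 else "PM"


if __name__ == "__main__":
    sample = time(21, 30)
    print(hand_angles(sample), is_night(sample), am_pm(sample))
```

Keeping these as plain functions, separate from any drawing code, makes each one easy to check on its own when a clock face looks wrong.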

Whereas the first task above took merely minutes, it took 20 hours or more of coaxing to make it perfect. In that process, it did things well, made mistakes, corrected itself (see the title of this piece), and got itself completely confused before I started over by handing it the partially working code and asking it what was wrong.

Like Google Maps when you make a wrong turn, it is very accommodating and infinitely patient. It is also very apologetic (a bit too much so), apologizing every time I told it the program didn’t work or didn’t produce what I wanted:

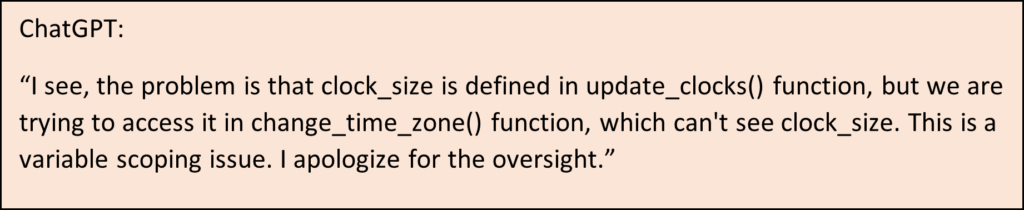

But it showed quite an awareness of some mistakes it made too:

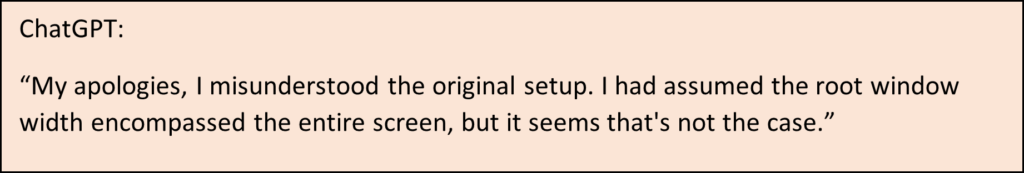

And

During this foray and others like it, we both got confused as to what we changed and what was original, what was working and what wasn’t, and where to go from here. Sounds a lot like human programmers, right? But three times, I simply started a new chat, pasting the code as a prompt with a statement of what my intent was with the program. In all cases, it analyzed it, explained what was wrong, suggested changes to fix it, and we were off and running again.

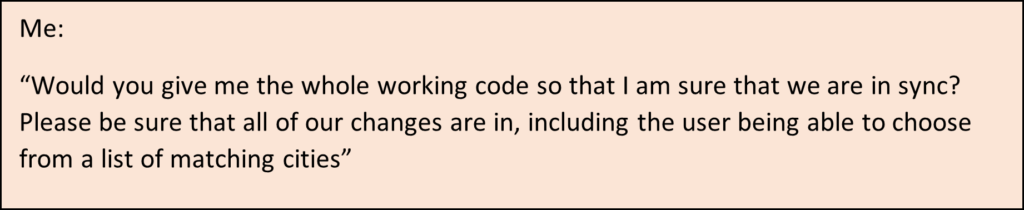

Interestingly, however, toward the end when I finally was wrapping up, I asked:

And it responded:

Should I now be mad at it since it was being insubordinate? Why wouldn’t it do this?

I did finish the program to my complete satisfaction.

While one experience is too little to judge all LLMs, here are my takeaways from the experience:

It worked. ChatGPT is a capable programmer with the ability to come up with algorithms to solve problems, implement them, and debug them when they don’t work.

It makes lots of mistakes. Very human-like, it tries things that on the surface seem to be correct, only to find that they don’t quite work that way. Sometimes it doesn’t know it is making errors, or the functions it relies on don’t do what it expected. When you point out what is not working, however, it is very capable of fixing it. Interestingly, sometimes it admits to having done something wrong and then fixes it. Why it made those mistakes in the first place is a puzzle. As we are taught in grade school, “Check your work!” In those cases, the error could have been caught before it produced the wrong answer.

It is very, very polite. Patient and apologetic. After the first couple of times, maybe a bit insipid?

I learned a lot about coaxing ChatGPT into doing what I want. I picked up a few more programming techniques, some available functions, and an organization process that I then employed. ChatGPT was, at the risk of anthropomorphizing, fearless. It makes me want to just do it – just take risks, solve the problem, and not think too much. If something doesn’t work, it can always be fixed, which is what ChatGPT often did.

Mostly, I found that today it worked like a partner programmer that made it easy to perfect what I wanted while I spoke to it in a stream-of-consciousness manner. I didn’t have to spend hours writing a detailed spec and then delivering it, only to find that the result wasn’t exactly what I wanted. Indeed, it would have been difficult to justify that for a clock! ChatGPT worked side by side with me, doing the heavy lifting while I kept getting more specific and changing my mind on what I wanted.

Clearly, this is the beginning. It does have a way to go. It may take years to get to the point of building complex programs without making mistakes, but you can feel it coming. And in a polite and accommodating way.

When asked to comment on this article, ChatGPT responded:

I am not so sure business leaders will continue to see it as just an assistant as it becomes capable of delivering completed tasks. But that is a great, politically correct message.

Thanks, GPT. And I’ve been on time to meetings ever since.